Hackers Developing Malicious LLMs After WormGPT Falls Flat

Data Breach Today

MARCH 27, 2024

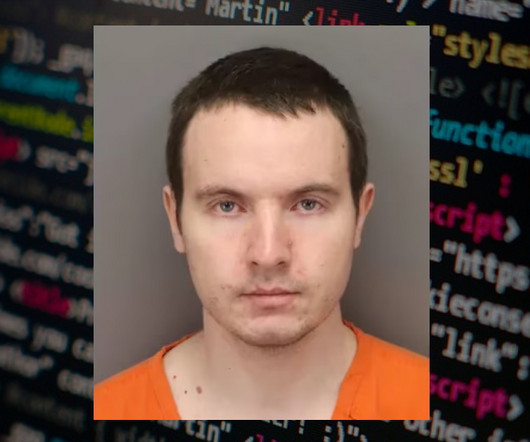

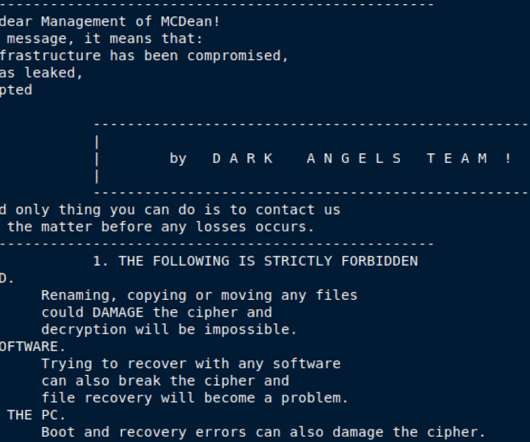

Crooks Are Recruiting AI Experts to Jailbreak Existing LLM Guardrails

Cybercrooks are exploring ways to develop custom, malicious large language models after existing tools such as WormGPT failed to cater to their demands for advanced intrusion capabilities, security researchers say.
